tl;dr: In the data visualization community, some people believe in universal rules such as “Never use pie charts” or “Always include zero in a chart’s scale”, while others believe that no rule applies in all situations, only general principles that must be adapted to the situation at hand based on judgment and experience. I propose a third possibility: many common dataviz design decisions can be codified as formal rules that apply in all situations, but those rules tend to be too complex to express as single sentences. They can, however, be expressed as relatively simple decision trees that can reliably guide practitioners of any experience level to the best design choice.

In recent years, there’s been a growing movement within the data visualization community of well-known experts pushing back against what are regarded by many as universal rules of data visualization, such as…

- “Never use pie charts.”

- “It’s always OK to start a chart’s quantitative scale at something other than zero, as long as it’s not a bar chart.”

- “High-precision ways of showing data are always better than low-precision ones.”

Those who object to these being universal rules argue that they all have exceptions, i.e., that there are situations in which it clearly makes sense to break them. I consider myself to be part of that movement. I can point to scenarios in which I consider that these rules—and many others—should be broken.

I depart from my fellow partisans, however, when it comes to what they say next (what movement would be complete without splintering into competing sub-movements?).

Those who believe that there are no universal rules tend to go on to assert that dataviz design decisions are too complex, subjective, or situation dependent to be captured as rules that always apply in every situation. Their battle cry is, “It depends!”, the implication being that practitioners can’t learn how to make these decisions by learning rules. They just need to develop judgment over time through practice and experience, and by studying others’ charts as examples.

“That sounds reasonable…”

…but I disagree. I think that many common dataviz design decisions can be codified as formal rules that can be followed by practitioners of any experience level to make the best possible design choice in any situation, without exception. The bad news is that these rules can’t be captured in simple “always/never” sentences, like the rule examples above. The good news is that many of them can be captured in relatively simple decision trees.

“Show me!”

I’ll show sample decision trees for two common-but-tricky dataviz design decisions in a moment, but I need to mention an important caveat first. These decision trees aren’t intended to be self-explanatory. They’re excerpted from my Practical Charts course, in which I explain the rationales behind their design via examples and scenarios. While you can probably guess how to interpret these trees, you probably won’t understand why they’re structured as they are without the context of the course. That’s OK, though, because that understanding isn’t necessary for the purposes of this article.

Now that that’s out of the way, here’s a sample decision tree that guides practitioners of any experience level when determining whether a pie chart or an alternative chart type is the best choice in virtually any situation:

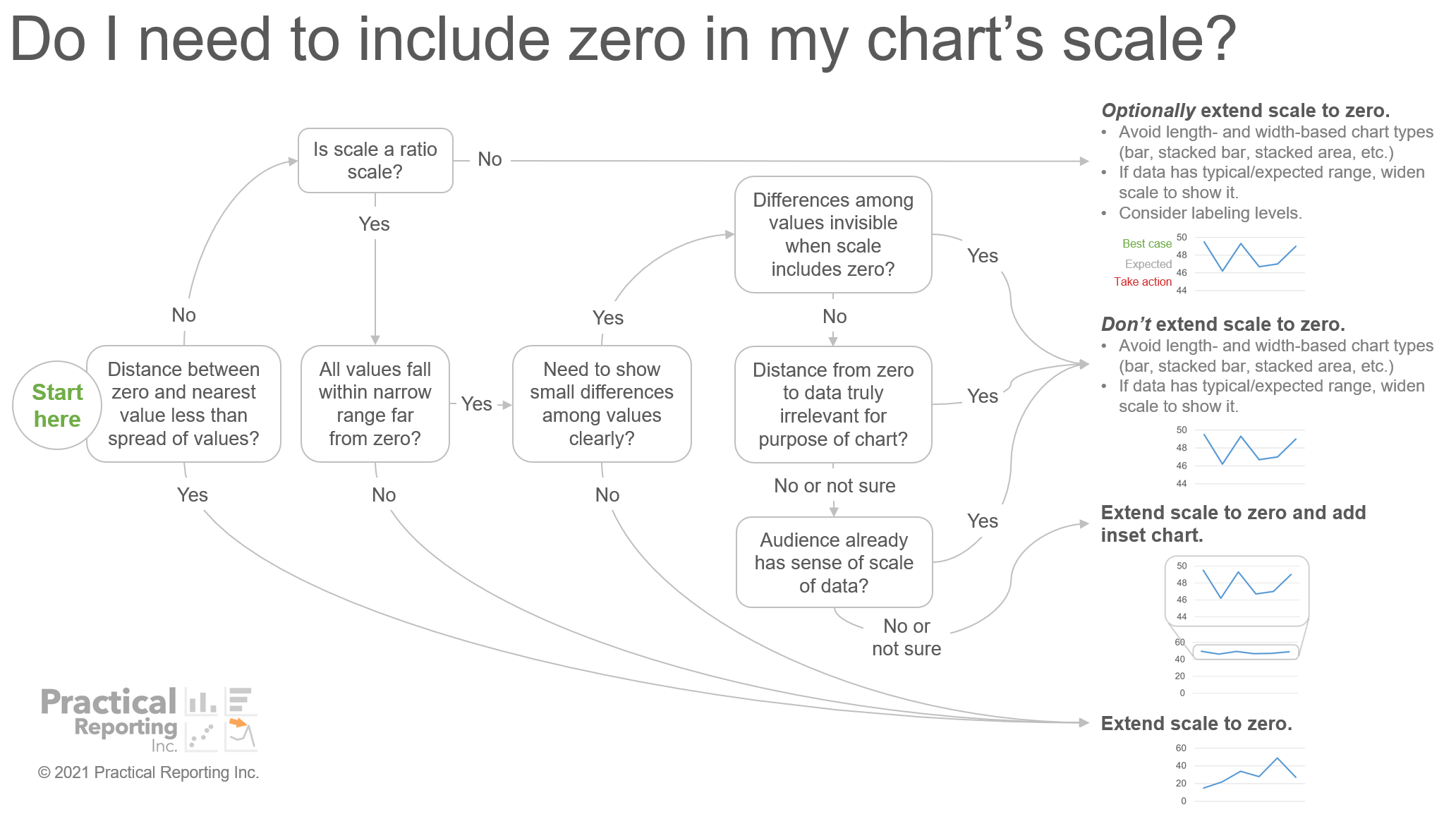

The decision tree below is for another common-but-tricky design decision: determining when it is or isn’t necessary to include zero in a chart’s quantitative scale.

“I’ve seen ‘chart chooser’ diagrams like the pie chart one before.”

Yes, there are many other “chart chooser” diagrams and tools floating around out there, but the ones that I’ve seen omit many relevant decision factors. For example, other tools that I’ve seen take, at most, two or three factors (e.g., if the values are parts of a total, how many parts there are, etc.) into account when determining when to use a pie chart or an alternative chart type. In the pie chart decision tree above, however, there are eight decision factors. If a tool takes just two or three decision factors into account, I’m not sure how it could recommend reliable design choices in a wide variety of situations.

I think this oversimplification is why, with other tools that I’ve seen, it’s easy to come up with realistic scenarios in which following the tool’s recommendation would yield a design choice that no one (including, almost certainly, the creator of the tool) would consider to be a good choice. More often, though, such tools don’t actually recommend a specific chart type for the situation at hand. Instead, they recommend a group of chart types and then advise chart creators to “use their judgment” to choose from within that group. “Use your judgment” isn’t helpful, though, especially for less experienced practitioners, and I think that it’s possible to offer more specific, helpful guidance than that.
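For readers who think in code, here is a minimal Python sketch of what it means for a decision tree to end every path in one specific recommendation rather than a group of options. Everything in it is an illustrative assumption: the `Node`, `Leaf`, and `decide` names, the three factors, the "more than five parts" threshold, and the recommendations are hypothetical placeholders, not the contents of the pie chart decision tree. It is deliberately oversimplified and only illustrates the structure.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple, Union


@dataclass
class Leaf:
    """End of a path: exactly one recommended design choice."""
    recommendation: str


@dataclass
class Node:
    """A yes/no question about the scenario, with a branch for each answer."""
    question: str
    test: Callable[[dict], bool]
    if_yes: Union["Node", Leaf]
    if_no: Union["Node", Leaf]


def decide(tree: Union[Node, Leaf], scenario: dict) -> Tuple[str, List[Tuple[str, bool]]]:
    """Walk the tree; return the recommendation and the questions answered on the way."""
    path = []
    node = tree
    while isinstance(node, Node):
        answer = node.test(scenario)
        path.append((node.question, answer))
        node = node.if_yes if answer else node.if_no
    return node.recommendation, path


# Hypothetical, oversimplified tree: factors, threshold, and outcomes are placeholders.
EXAMPLE_TREE = Node(
    question="Are the values parts of a total?",
    test=lambda s: s["parts_of_total"],
    if_yes=Node(
        question="Are there more than five parts?",
        test=lambda s: s["part_count"] > 5,
        if_yes=Leaf("bar chart"),
        if_no=Node(
            question="Do readers need to compare parts precisely?",
            test=lambda s: s["needs_precise_comparison"],
            if_yes=Leaf("bar chart"),
            if_no=Leaf("pie chart"),
        ),
    ),
    if_no=Leaf("bar chart"),
)

choice, path = decide(
    EXAMPLE_TREE,
    {"parts_of_total": True, "part_count": 3, "needs_precise_comparison": False},
)
print(choice)
for question, answer in path:
    print(f"  {question} -> {'yes' if answer else 'no'}")
```

Returning the path along with the recommendation means the practitioner can see which factors drove the choice, which is also what makes it possible to pinpoint where a tree goes wrong when someone finds an exception.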

“But how do you know that your decision trees always point to the truly best design choice in any situation?”

Well, I can’t be 100 percent certain, but I’ve shown the pie chart decision tree to thousands of workshop participants and blog post readers from a wide variety of demographics and backgrounds, and implored them to tell me if they can think of a single scenario in which it wouldn’t point to what they’d consider to be the best design choice for that scenario. When I started showing early drafts of that particular decision tree, people did find scenarios in which it wouldn’t recommend what they considered to be the best choice, and I made several tweaks to the tree as a result (thanks, guys!). It’s been at least a year since anyone’s spotted an exception, though, so I think it’s pretty solid at this point. If you spot an exception, however, please tell me so that I can revise the tree!

The “Do I need to include zero in my chart’s scale?” decision tree, on the other hand, is hot off the presses and will likely get a few tweaks as people spot scenarios in which it wouldn’t recommend what they consider to be the best design choice. I expect it to be pretty solid after a few hundred people have scrutinized it and I’ve shored up any exceptions. If you want to understand the rationales behind that particular decision tree, here’s the very long explanation.

I’m guessing that some readers might object that these decision trees just codify my own personal preferences or biases. That could, of course, be true, but it seems unlikely since no one has pointed to anything that they personally consider to be an exception in quite some time, despite my supplications to do so. I suspect that these decision trees just codify practices that most experienced practitioners agree with, but that are complicated to formalize in a robust way, in much the same way that it’s tricky to codify the rules of English grammar, but, once those rules are codified in a robust way, experienced speakers tend to agree with them.

“Are you saying that all dataviz design decisions can be codified as rules?”

No. There are plenty of design decisions that are too complex, creative, or situation specific to be codified as universal rules. For example, I don’t think it’s possible to codify specific rules for determining which parts of a chart to visually highlight, or for formulating effective chart titles. For those types of decisions, we can only offer general principles and high-level advice such as, “It’s often (but not always) useful to put the main message of a chart in its title.” For the many design decisions that can be codified as rules (my Practical Charts course and upcoming book contain over 20 such tools), however, there are significant benefits in doing so.

Writing provides a useful analogy here. Can every aspect of writing be codified as formal rules that will allow anyone to write the next best seller? Obviously not. Does that mean that writing doesn’t have any codified rules that apply in all situations? No, writing has many such rules, from basic spelling and grammar to the logical sequencing of ideas. For writing decisions that can be codified as universal rules (e.g., when to use “they’re”, “there”, or “their”), providing practitioners with specific guidance for those decisions instead of telling them to “use their judgment” makes it far easier for them to become competent quickly and to make the best choice every time.

“Are these rules truly unbreakable? Do they really apply in every situation?”

Just like writing, they’re are times when it makes sense to break formal rules, but there limited to times when the author is exercising artistic license (see what I did their?). When creating data art, then, it can absolutely make sense to knowingly break the rules. The keyword in that last sentence, though, is knowingly. Deliberately breaking rules for artistic reasons can be extremely effective, but breaking them because you didn’t know about them in the first place will reliably produce charts that are unobvious, confusing, ambiguous, and/or misleading, just as written prose that breaks the basic rules of writing will be unobvious, confusing, ambiguous, and/or misleading (how many times did you have to re-read the first sentence in this paragraph?).

“What about data visualization research? Isn’t that supposed to tell us what ‘the rules’ are?”

If a data visualization research study finds that people can compare the parts of a total with one another more precisely as a bar chart than as a pie chart, that’s important information to know when choosing chart types in practice. Does that mean that practitioners should always choose bar charts over pie charts? No, because, as the pie chart decision tree above shows, the ability to compare the parts of a total with one another precisely is just one of eight decision factors that go into determining whether to use a bar chart or a pie chart in a given situation. (I’ll publish a whole article on pie charts–yes, apparently, the world needs yet another pie chart article–when I’ve braced myself sufficiently for the inevitable backlash.)

Data visualization research findings are crucial inputs into making design decisions, but they don’t typically point to specific design choices in specific situations. They tend to focus on just one or a few factors, such as how precisely people can compare quantities in a few different types of visualizations, how likely people are to misinterpret the underlying data in a few specific types of views, or how rapidly people can interpret a few different ways of showing the same data. This is why many studies caution practitioners to “use their judgment” when extrapolating the study’s findings to real-world chart design decisions.

In order to develop practical guidance for questions like, “Exactly when should I use a bar chart instead of a pie chart?”, multiple research findings need to be factored into a single decision tool that takes all relevant decision factors into account. I believe that the decision trees in my Practical Charts course accomplish that, but tell me on LinkedIn or Twitter if you disagree!

“Do you think that research will eventually resolve most best practice controversies?”

Many people seem to assume that controversial dataviz design best practices (pie charts, anyone?) have remained controversial or in murky “it depends” territory for decades because there hasn’t been enough research into them, and that a deeper understanding of how humans process visual information will eventually settle these long-running debates once and for all.

While that’s undoubtedly the case for some of these controversies, I suspect that many of them evaded consensus because we hadn’t identified all of the factors that go into making those design decisions, and hadn’t sorted out the complex ways that those factors interact when making a final design choice in practice. In other words, we didn’t have robust decision trees for them. When those decision trees are in hand, the controversy tends to dissipate.

Can research produce these decision trees? Unfortunately, developing them is essentially a very tricky Boolean logic puzzle, and Boolean logic puzzles can’t be solved with lab experiments. They just need to be reasoned through—while factoring in research findings for decision conditions for which research is available—and then reality-checked with as many real-world audiences and in as many real-world situations as possible.
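The "reality-check" step can be pictured as something like a regression test for a decision tree. The Python sketch below is only an illustration under stated assumptions: `find_exceptions`, the two toy trees, the factor names, and the scenarios are all hypothetical, and the trees are far simpler than any real one. It collects scenarios together with the design choice a reviewer considers best, then lists every scenario in which a tree recommends something else.

```python
from typing import Callable, Dict, List

Factors = Dict[str, object]


def find_exceptions(tree: Callable[[Factors], str], scenarios: List[dict]) -> List[dict]:
    """Return every scenario where the tree's choice differs from the reviewer's."""
    exceptions = []
    for scenario in scenarios:
        recommended = tree(scenario["factors"])
        if recommended != scenario["reviewer_choice"]:
            exceptions.append({
                "scenario": scenario["name"],
                "tree_recommends": recommended,
                "reviewer_prefers": scenario["reviewer_choice"],
            })
    return exceptions


def draft_tree(factors: Factors) -> str:
    """Hypothetical early draft that considers a single factor."""
    return "pie chart" if factors["parts_of_total"] else "bar chart"


def revised_tree(factors: Factors) -> str:
    """Hypothetical revision that adds a second factor after an exception was reported."""
    if not factors["parts_of_total"]:
        return "bar chart"
    if factors["part_count"] > 5:  # placeholder threshold, not a real guideline
        return "bar chart"
    return "pie chart"


# Hypothetical scenarios, each with the choice a reviewer considers best.
SCENARIOS = [
    {"name": "three-way budget split",
     "factors": {"parts_of_total": True, "part_count": 3},
     "reviewer_choice": "pie chart"},
    {"name": "twelve-way budget split",
     "factors": {"parts_of_total": True, "part_count": 12},
     "reviewer_choice": "bar chart"},
    {"name": "sales by region",
     "factors": {"parts_of_total": False, "part_count": 4},
     "reviewer_choice": "bar chart"},
]

print("draft exceptions:", find_exceptions(draft_tree, SCENARIOS))
print("revised exceptions:", find_exceptions(revised_tree, SCENARIOS))
```

In this toy setup, the draft tree fails on the twelve-way split and the revised tree passes all three scenarios. The scenario list only ever grows, so each reported exception becomes a permanent check against later revisions.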

I think of developing these decision trees as analogous to developing grammar-checking rules for word-processing software. The main challenge isn’t that we lack research into how people process language. It’s that it’s hard to reverse-engineer the rules that expert speakers of a language intuitively know, and then codify those rules robustly enough that they make good grammatical recommendations automatically in any situation. That’s a tricky logic problem, and no amount of lab experimentation with research subjects will solve it.

I’m not suggesting that data visualization research isn’t valuable or that it shouldn’t continue, however. Quite the opposite. Many decision trees can undoubtedly be improved by new research findings, and research findings are invaluable when making design choices that are too complex or situation-dependent to be codified in simple decision tools. Improving our fundamental understanding of how humans process visual information also has intrinsic value and many other practical applications.

“So, what does this mean when it comes to ‘universal rules of data visualization’, then?”

A few things, I think:

- It is possible to codify many—but not all—design decisions as rules that can be used by practitioners of any experience level to make the truly best design choice in any situation. These design decisions don’t ultimately come down to “it depends” or “use your judgment”.

- The rules for these types of design decisions can’t be expressed as single “always/never” sentences. Attempting to distill these decision trees into single sentences would result in the worst run-on sentences in history. And trying to shorten or simplify them would inevitably open up the possibility that following the rules would yield design choices in certain situations that wouldn’t be the best choices. The simplest possible expression of these rules, then, is as decision trees, not sentences.

- Many long-running best practice controversies can probably be resolved by moving from debating single-sentence rules to discussing decision trees. In data visualization best practice debates, people often argue for or against single-sentence positions, such as, “Never use pie charts”, or “Always include zero in the quantitative scale.” As I hope I’ve shown, these design decisions are too complex to be captured in a single sentence, so debates over single-sentence positions will never end because no single-sentence position can ever be correct in every situation. I’ve seen many workshop participants strongly disagree with one another on a given data visualization best practice, but then melt into agreement when walked through a decision tree that factors in everyone’s concerns and objections.

- Distilling universal data visualization rules is hard. While these decision trees may seem fairly straightforward, they were difficult to develop. Just realizing that decision trees of this type (as opposed to text, Venn diagrams, or other formats) were a good way to express these rules wasn’t obvious. I was only able to develop them after years of dataviz teaching and consulting, and after looking at many thousands of real-world charts to make sure that I was considering the widest possible spectrum of scenarios. Even with all that experience, figuring out which decision factors needed to be included in each tree and how to sequence and connect them was some of the most challenging, cognitively demanding work that I’ve ever done, and drew on my (now distant) experiences as a software developer.

I certainly didn’t invent the idea of using decision trees to codify and simplify potentially complex decisions, though. In medicine, for example, similar tools called “clinical pathways” are widely used to provide clinicians of any experience level with guidance on how to make the best possible medical decision in tricky-but-common situations.

“Now what?”

Like I said, I’m firmly in the “it depends” camp, but I think we can do better than that. I wrote this article to encourage my compatriots to go beyond “it depends” and start asking, “What, exactly, does it depend on, and can those factors be organized into codified rules that can be applied by practitioners of any experience level in any situation to guide them to the best design decision every time?” This isn’t an easy undertaking (it took me several years to develop the 20+ decision-making tools in my Practical Charts course), but I hope that I’ve convinced you that it’s worth the effort.

For some design decisions, we will be able to come up with relatively simple rules and tools which will make practitioners’ lives much easier and instantly improve the quality of their work. Other design decisions will turn out to be too complex, subjective, or situation dependent to codify as rules despite our best efforts, and that’s OK. We should at least try, though, since, if we don’t, we’re falling back onto “use your judgment”, or its cousins, “use common sense” and “choose the design choice that looks best to you”, none of which are helpful or reliable, especially to those who are early in their dataviz journeys.

If, after reading this article, you still feel that there are no universal rules that apply in all situations and that there will always be exceptions, tell me on LinkedIn or Twitter! I just ask, however, that you also provide at least one scenario (even a hypothetical one) in which a reality-tested decision tree would not yield what you consider to be the best design choice. If you can’t come up with even one such scenario, I’m going to struggle to agree that these design decisions can’t be codified as rules that work in any situation. If you can, great! Let’s update the relevant decision tree to account for it.

As an independent educator and author, Nick Desbarats has taught data visualization and information dashboard design to thousands of professionals in over a dozen countries at organizations such as NASA, Bloomberg, The Central Bank of Tanzania, Visa, The United Nations, Yale University, Shopify, and the IRS, among many others. Nick is the first and only educator to be authorized by Stephen Few to teach his foundational data visualization and dashboard design courses, which he taught from 2014 until launching his own courses in 2019. His first book, Practical Charts, was published in 2023 and is an Amazon #1 Top New Release.

Information on Nick's upcoming training workshops and books can be found at https://www.practicalreporting.com/